
No Smoke ......

No smoke, no mirrors, no snake oil.

Just NonStop Services to suit you.

www.brightstrand.com

Digging for oil – DRNet is helping NonStop users strike it rich!

NTI has been in

business a very long time and has been providing support for NonStop

users with its DRNet product set. Among its many customers there

are numerous financial institutions relying on DRNet for data

replication. When it comes to supporting payments solutions, these

financial institutions cannot afford for their systems to be down for

any reason and so historically, these institutions have depended upon DRNet

to maximize their systems’ uptime, no matter what. However, DRNet

offers so much more than just support for disaster recovery and with as

much talk as there is today of maximizing the value locked away in the

data these financial institutions accumulate over time, the replication

capabilities of DRNet are being looked at again for their

applicability to data integration.

In the post of April 2, 2018, reference is made to the recent article

published in the March – April, 2018, issue of The Connection - Data is

King: What NonStop Mission-Critical Applications Can Learn from Formula 1.

“Of course we are all familiar with

the need to ensure data is never lost or taken offline for any reason,”

we said, quoting the article. “DRNet has been involved in moving

data for many decades now and is relied upon to ensure the degree of

availability and integrity IT demands.” However, probably more

importantly, “DRNet has been enhanced and is making a strong case

for consideration when it comes to data integration.”

What is often overlooked today is that NTI’s product, DRNet, is

an abbreviation for Data Resource Networking – a product name that

oftentimes doesn’t convey all that we do, even as the acronym has often

been mistaken for Disaster Recovery Networking. It’s very true that for

many of our customers, support for Disaster Recovery is the key

capability of DRNet that they capitalize on but when you consider

DRNet today, it is more about Disaster Avoidance, even as it is a

tool developed to get at the most valuable resource in IT – the data!

And fundamentally, when it comes to those payments solutions that have

been supported by DRNet over the years, this awakening awareness

about the value of data isn’t coming as a surprise to NTI. We protected

it in the past and now we are working to better integrate it with the

rest of IT!

In the April 2018 issue of the magazine, Digital Transactions, in

closing an article on Trends & Tactics, Richard Crone of Crone

Consulting, San Carlos, California, suggests “Data is the new oil.

Payment is the well.” It may be a bit dramatic but it does convey the

significance of data and the value it is providing those financial

institutions supporting payments solutions. After all, the transactions

that flow through their platforms include insights into their customers

and, along with the transactions’ elements, are helping to predict customers’

future behavior. However, this transactional data is just one piece of

the picture – a jigsaw puzzle oftentimes – and as such it needs to be

integrated with data warehouses, data lakes and other related

technologies supporting today’s business analytics processing. Without

access to all the data, results from these analytics may end up

skewed. DRNet is the best solution if you really want to

strike oil when looking at all the data you acquire with your payments

solution!

DRNet is all about networking data resources and while in the

past this property of DRNet has helped NTI address the

all-important issue of disaster avoidance, it is critical to recognize

just how valuable a role DRNet can play in future data

integration projects. Integration will be a highlight of NTI’s

presentation at the upcoming GTUG / European NonStop HotSpot -

Conference & Exhibition and should this be an area of interest for you

and your company, make sure you join us for this update on DRNet.

And remember too, visit the

NTI web site

for more information about DRNet – just check under the PRODUCTS

tab or reach out to the sales team at NTI via email

sales@network-tech.com

or simply call us at +1.614.794.6000

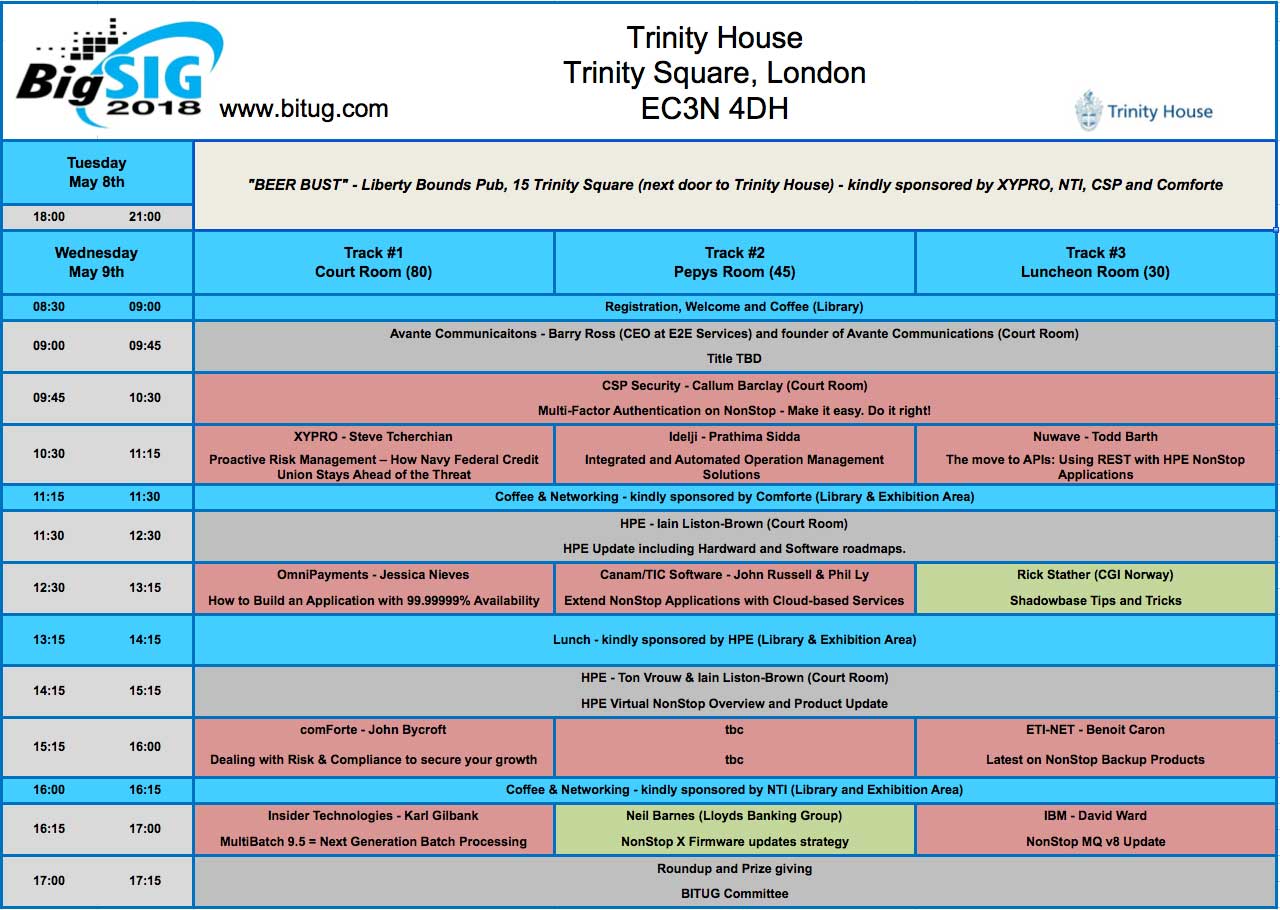

BITUG’s upcoming BIG SIG event in London on May 9, 2018

If you haven’t been to BITUG’s “BIG SIG” event in recent times then you

really have missed out on participating in the premier gathering of the

NonStop community in the British Isles. It is always well supported by

the HPE NonStop team and attracts all of the major NonStop vendors – and

a new one this year!

For the British NonStop users this is simply the “must-attend” event of

the year when it comes to hearing the latest news from NonStop

development, product management and sales.

This year BITUG will be holding our "BIG SIG" on Wednesday, May 9th,

2018, in London and we welcome our BITUG users to come along to this

event. As this is the premier event of the year for the BITUG user

community, it has always proved popular with all NonStop stakeholders.

This year we have once again worked hard to ensure the agenda covers the

topics we know you have expressed an interest in – the times, for

NonStop, are most definitely “a-changing!”

The event is being held at our previous location – the prestigious

Trinity House – Tower Hill, London, EC3N 4DH (http://www.trinityhouse.co.uk/th/about/index.html).

And it really is hard to argue against this venue with its panoramic

views of the Tower, Tower Bridge and the Thames and its close proximity

to many pubs and restaurants that are an integral part of life at this

end of town.

As always with BITUG BIG SIGs, this year’s event will be free of charge to

NonStop users, with morning tea/coffee breaks and lunch provided by our

Sponsor vendors. There will of course be the familiar Vendor exhibition

area with a full contingent of 12 vendors confirmed.

As per previous BIG SIG events, we will again be holding a ‘Beer Bust’ the

evening before. This will be located at the Liberty Bounds pub – just a

stone’s throw away from Trinity House – on the evening of 8th

May from 19:00. All attendees of BIG SIG are welcome to come along for a

drink and finger food sponsored by the following vendors – XYPRO, CSP,

comforte, NTI and Rocket Software.

The Agenda is as follows: (but subject to change)

If

you would like to attend you need to complete registration by following

this link:

www.bitug.com/tickets

Please note: You need to complete a separate registration for each

attendee! (for example, if you have more than one attendee from the same

company)

For now, we look forward to welcoming you!

On behalf of the BITUG Committee – Thanks and see you soon!

Of course, any questions or queries can be directed to anyone from the

committee or via the website page: (https://www.bitug.com/contact-bitug/)

New Article on Hybrid IT from NuWave

Hybrid IT infrastructure has been a core part of

HPE’s strategy for a number of years now, and it’s easy to see why.

Across their product portfolio, they have made a massive investment in

public and private cloud infrastructure, developing dedicated hardware

and partnering with Microsoft Azure and others. But what does Hybrid IT

mean for the average NonStop installation?

As HPE NonStop users, we know that our platform and

applications typically house the most important parts of our enterprise

– the ATM system that must not deny transactions unnecessarily, the

manufacturing production line that must produce thousands (or millions)

of items an hour without fail, or the retail point of sale system that

must always be available to our customers. How does that mission

critical functionality link in with the cloud?

If you missed NuWave's article by Andrew Price in

Connect Now, check out "HPE

NonStop and Hybrid IT: The Best of Both Worlds" to read the rest of

the article.

Availability Digest Reminds Us that “Openness Was Not Always Ensured”

Years ago, Digest Managing

Editor Dr. Bill Highleyman wrote a paper entitled “Can the Computer

Industry Truly Support Openness?” The paper made several assertions that

seem surprising in today’s technology. It noted, for instance, that

computer hardware vendors were reluctant to open their systems for true

interoperability with the products of competitors. After all, open

standards would make it easy for IT managers to switch products since

any product that complied with the standard could be substituted for

another such product.

However, openness abounds in today’s software technology and adheres to

open standards. This means that the software is free for use, with

source code defined by its community of developers and users. Java is

the open standard for programming languages. SQL is the open standard

for databases. And x86 is the open standard for system architectures.

The most prominent example of open software is Linux. Windows, by

contrast, is proprietary, or closed. This article discusses the concept

of openness and how technologies that embrace it will lead in the future

to the ultimate in operational efficiencies.

In addition to “Openness Was Not Always Ensured,” read the articles

below in the Availability Digest.

What is Reliability? – In the literature, the terms that are used to

describe computer system reliability are what we might describe as

“slushy.” There are no agreed-upon definitions – the user of such terms

is free to match them to his purpose, often with no attempt at a formal

meaning. There are certainly no legal definitions that would stand up in

court. In this article, we suggest a simple method to quantify system

reliability, eliminating any ambiguity or suggestion of marketing

intent. Our result is a table that compares different, highly reliable

technologies for IT systems.

Can Applications Achieve Seven 9s Availability? – Seven 9s availability

is an average of three seconds downtime per year. Can an application

achieve that? If it is only the application, seven 9s very well may be

possible, especially if the application has been “burned in” through use

over time. But if we are evaluating the availability of an application,

we must also take into account the hardware upon which it is running.

Typical hardware servers have availabilities of three to four 9s. Other

factors impacting availability include network failures, human error,

and environmental issues. Then there is the consideration of SLAs. When

a license agreement is negotiated, how clear is it that a claim of seven

9s should be attributed only to the application without its being

impacted by other system availabilities?
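The arithmetic behind these figures can be checked with a short Python sketch. It is an illustration only: the function names are ours, and the series model (an application is up only when its hardware is also up, with independent failures) is a simplifying assumption rather than a method taken from the article.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # average year, including leap days


def downtime_seconds_per_year(nines: int) -> float:
    """Expected yearly downtime for an availability of `nines` 9s (7 -> 99.99999%)."""
    unavailability = 10.0 ** (-nines)
    return SECONDS_PER_YEAR * unavailability


def serial_availability(*availabilities: float) -> float:
    """Availability of a chain in which every component must be up.

    Assumes independent failures, which is a simplification.
    """
    result = 1.0
    for availability in availabilities:
        result *= availability
    return result


if __name__ == "__main__":
    for nines in (3, 4, 7):
        print(f"{nines} nines: {downtime_seconds_per_year(nines):,.2f} seconds/year")
    # A seven-9s application running on four-9s hardware.
    combined = serial_availability(1 - 1e-7, 1 - 1e-4)
    print(f"application plus hardware: {combined:.7f}")
```

Seven 9s works out to a little over three seconds per year, while under this model the combined figure can never be better than that of the weakest component, which is why the hardware dominates the result.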

Data Deduplication in the Cloud – Data deduplication is a powerful tool

by which a specific block of data in a large database is stored only

once. If it appears again, only a pointer to the first occurrence of the

block is stored. Since pointers are very small compared to the data

blocks, significant reductions in the amount of data stored can be

achieved. With regard to cloud storage, moving an enterprise’s data to

the cloud is today a common practice. Cloud providers such as Amazon

with its Simple Storage Service (S3) charge customers for the amount of

cloud storage they use. Therefore, the customer is incentivized to

minimize the amount of data storage that is consumed. Deduplication is a

popular method used to achieve this goal.
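As a rough illustration of the idea, the Python sketch below stores each distinct block once and keeps a list of pointers (here, SHA-256 digests) in place of repeated blocks. The fixed 4096-byte block size and the function names are assumptions made for the example; real products differ in how they chunk and index data.

```python
import hashlib


def deduplicate(data: bytes, block_size: int = 4096):
    """Split data into fixed-size blocks and store each distinct block once.

    Returns (store, pointers): `store` maps a digest to the block contents,
    `pointers` lists the digest of every block in original order.
    """
    store = {}
    pointers = []
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        digest = hashlib.sha256(block).hexdigest()
        store.setdefault(digest, block)  # keep only the first occurrence
        pointers.append(digest)
    return store, pointers


def restore(store, pointers) -> bytes:
    """Rebuild the original data by following the pointers."""
    return b"".join(store[digest] for digest in pointers)


if __name__ == "__main__":
    data = b"A" * 4096 * 3 + b"B" * 4096  # three identical blocks, one different
    store, pointers = deduplicate(data)
    stored_bytes = sum(len(block) for block in store.values())
    print(f"original: {len(data)} bytes, stored blocks: {stored_bytes} bytes")
    assert restore(store, pointers) == data
```

In this toy case four blocks shrink to two stored blocks plus four small pointers, which is the saving a customer paying per gigabyte is after.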

@availabilitydig – The Twitter Feed of Outages - Our article highlights

some of our numerous tweets that were favorited and retweeted in recent

days.

Digest Managing Editor Dr. Bill Highleyman will present “Old Geezer’s

Guide to What Lurks (but Still Works) in Your Data Center” at NENUG on

May 21, 2018, at HPE in Andover, Massachusetts. For event information,

see https://bit.ly/2HvE2CN.

The Availability Digest offers one-day and multi-day seminars on High

Availability: Concepts and Practices. Seminars are given both onsite and

online and are tailored to an organization’s specific needs. Popular

seminars are devoted to achieving fast failover, the impact of

redundancy on availability, basic availability concepts, and eliminating

planned downtime.

In addition, the Digest provides a variety of technical writing,

consulting, marketing, and seminar services. Individuals too busy to

write articles themselves often hire us to ghostwrite. We also create

white papers, case studies, technical manuals and specifications, RFPs,

presentation slides, web content, press releases, advertisements, and so

on.

Published monthly, the Digest is free and lives at

www.availabilitydigest.com. Please visit our Continuous Availability

Forum on LinkedIn. Follow us as well on Twitter @availabilitydig.

Visit Gravic at GTUG’s European NonStop HotSpot – Conference & Exhibition

We look forward to seeing

everyone at the

European NonStop HotSpot – Conference & Exhibition, hosted by GTUG

and Connect Germany on 14-16 May at the

Westin Hotel in Leipzig, Germany. On 15 May, we

are co-sponsoring a Partner Technical Update (PTU) breakfast meeting for

HPE Sales, Technical Services, and Solution Architects. HPE attendees

should

contact us for

additional information if they plan to attend and have not already

registered.

Please stop by the booth or attend our presentation,

New HPE Shadowbase Success

Stories (including Business Continuity, Data Integration, Zero Downtime

Migrations).

We describe numerous HPE Shadowbase success stories in several solution

categories:

We will also present, New

HPE Shadowbase Features that Solve Customers' Challenges (including Zero

Data Loss, DB2 as a source, transforming Enscribe data to industry

standard DBs).

We describe the recently

released HPE Shadowbase features that are helping our customers to solve

their business challenges

including:

- Synchronous data replication that will deliver zero data loss during a business continuity event

- Further improvements that simplify migrating from RDF or OGG to HPE Shadowbase

- DB2 as a source for Shadowbase replication

- Enscribe to SQL replication enhancements

- Additional BASE24 feature support

- Other new features and future product direction

Please

contact us if you are interested in

discussing either of our presentations’ content or having us share this

information with you and your colleagues.

Gravic is co-sponsoring the Porsche factory excursion on 17 May.

Attendees will visit the Porsche manufacturing plant – a NonStop user –

in Leipzig. Participants will take a guided tour through the production

lines where the cars are made to perfection, and then ride alongside drivers

in a Panamera or a comparable Porsche on a speedy racetrack. We hope to

see you there!

Gravic Publishes Article on the Advantages of Scale-out Architectures

Gravic recently published the article Scale-up is Dead, Long Live Scale-out! in the March/April

issue of The Connection.

Keeping up with user demand is a

significant challenge for IT departments. The traditional scale-up

approach suffers from significant limitations and cost issues that

prevent it from satisfying the ever-increasing workloads of a 24x7

online society. The use of massively parallel processing and scale-out

architectures is the solution, since they can readily and

non-disruptively apply additional compute resources to meet any demand,

at a much lower cost, and with improved service availability. The use of

the HPE Shadowbase data replication engine to share and maintain

consistent data between multiple systems enables scale-out application

and workload distributions across multiple compute nodes, which provides

the necessary scalability and availability to meet the highest levels of

user demand now and into the future.

To speak with us about your

data replication and data integration needs, please call us at

+1.610.647.6250, or email us at

SBProductManagement@gravic.com.

Hewlett Packard Enterprise

directly sells and supports Shadowbase solutions under the name HPE

Shadowbase. For more information, please contact your local HPE

Shadowbase representative or

visit our website.

Please Visit Gravic at these Upcoming 2018 Events

- GTUG IT-Symposium – Leipzig, Germany, 14-16 May

- NENUG Meeting – Andover, MA, 21 May

- NYTUG Meeting – Berkeley Heights, NJ, 23 May

- N2TUG Meeting – Dallas, 7 June

- VNUG Conference – Stockholm, 11-12 September

- ATUG Meeting – Atlanta, 19 September

- MEXTUG Meeting – Mexico City, TBD September

- CTUG Conference – Toronto, 26-27 September

- Connect HPE Technical Boot Camp – San Francisco, 11-14 November

Last Chance to Sign Up for NYTUG and NENUG!

Deadlines are approaching for the NYTUG (New York

area Tandem User Group) and NENUG (New England NonStop User Group) meetings. Both

events have a focus on HPE education this year, so each one has 3 hours

of exciting announcements and fresh content from HPE.

NENUG will be held on Monday, May 21st. Sign up now on the NENUG Eventbrite page.

NYTUG will be held on Wednesday, May 23rd. Sign up now on the NYTUG Eventbrite page.

We are also planning a fun networking event on

Sunday, May 20th that will include a tour of Fenway Park and lunch at

Boston Beer Works. If you are interested in attending, please email

itbulletin@nuwavetech.com by Friday, April 27th.

Ask TandemWorld

Got a question about NonStop?

ASK Tandemworld

Keep up with us on Twitter @tandemworld

We are currently seeking skilled resources

across the EMEA region,

contact us for More Info

www.tandemworld.net

Ten Years of CommitWork Developer Suite (CDS)

CDS

allows traditional NonStop programmers to use the modern Eclipse IDE

with COBOL85, Screen COBOL or TAL without changing their underlying

development environment. This means that customers can use their

existing compile macros and commands of the GUARDIAN environment.

Features and benefits

- There is no need to change to cross compilers.

- No need for OSS! CDS can be used in a GUARDIAN-only environment.

- Comfortable COBOL editor with syntax highlighting, keyword assistant, folding regions, outline view of sections for quick navigation, and customizable coloring. Compile warnings and errors are shown inside the editor.

- Starts the customer's host compile process.

- Timestamp validation between local and host file versions.

- Access to and visualization of spooler entries.

- Use of all features of a modern integrated development environment, such as software repositories, history view of source code, task management and many more features of existing Eclipse plugins.

- Young people with Eclipse experience can now enter the traditional world of NonStop more quickly.

- TAL editor with syntax highlighting, keyword assistant, folding regions, outline view of sections for quick navigation, and customizable coloring.

- Additional SQL/MP support inside the COBOL editor.

- DDL browser to find and list entries inside your data dictionary.

- Secured FTP transfer using our additional FTPS plugin.

- New SFTP plugin

More Information

Have a look

inside our booklet at

http://www.commitwork.de/1/products/commitwork-developer-suite/

Contact:

For a free CDS

demo license, please contact:

CommitWork GmbH

Hermannstrasse 53-57

D-44263 Dortmund

Germany

Phone: +49-231-941169-0

Fax: +49-231-941169-22

Email: info@commitwork.de

Web: http://www.commitwork.de

Parsing JSON Messages in COBOL

JSON Thunder, from Canam Software Labs, generates COBOL and C source code for reading

and writing JSON messages. Using the Thunder toolset, a JSON Handler

Design is created that specifies the mapping between program data fields

and JSON nodes as well as the data validation rules that should be

applied. The Handler Design is then used to generate all the COBOL or C

source required to parse and create JSON. The code can be used in new

or existing programs – no runtimes required!

To see how JSON

Thunder works, click

here.

Thunder is the

solution of choice for developers and IT architects for processing XML

and JSON messages in COBOL and C. Its model-driven approach frees up

developers’ time to focus their expertise on the business logic in their

programs while reducing the time to market for applications.

Thunder is now

available in three unique solutions.

JSON Thunder

for COBOL and C code creation.

XML Thunder

for COBOL, C and Java code creation.

Thunder Suite,

which packages both XML Thunder and JSON Thunder plus version

control for a truly comprehensive code generation solution.

Be sure to visit

Canam Software and TIC at BITUG 2018 in London, England on May 9th, and

at GTUG in Leipzig, Germany, May 14th to 16th. Schedule a

one-on-one

demo of Thunder today! Email

info@canamsoftware.com

or call (289) 719-0800.

______________________________________________________________________________

To start a

free 30-day trial of Thunder Lite and experience the power of a

model-driven approach to code creation that JSON and XML-enables COBOL,

C and Java programs, visit

www.jsonthunder.com

or

http://www.xmlthunder.com

CommitWork presentation “Using Alexa with your software environment”

at the European NonStop HotSpot 2018

CommitWork invites you to visit

the presentation

“Using Alexa with your software environment” on May 15 at 3:30 PM (Room

Wittenberg).

Abstract:

Alexa is a virtual speech assistant

developed by Amazon.

Users can extend the Alexa capabilities by

developing a new 'skill'.

In this session, you get an introduction about how to develop an Alexa

Skill with Java and see how to integrate it into your existing software

environment.

www.commitwork.de

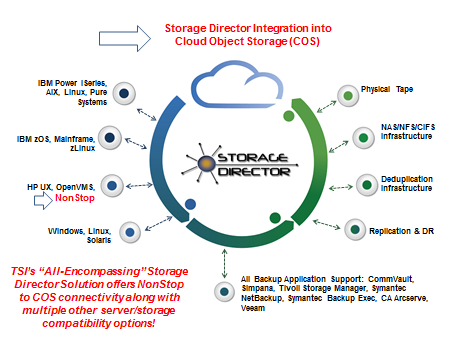

Considerations for Cloud Object Storage

By Glenn Garrahan, Director HPE Business, Tributary

Systems

NonStop and Cloud Object Storage:

Object Storage architecture

manages data as objects, unlike traditional file systems which manage

data as a file hierarchy or block storage which manages data as blocks

within sectors and tracks. Each object includes the data itself, some

amount of

metadata,

and a globally unique identifier. Object storage can be implemented at

multiple levels, including the object storage device level, the system

level, and/or the interface level. The advantage of object storage is it

enables capabilities not addressed by older storage architectures.

Examples may include interfaces that are directly programmable by the

application, a namespace that can span multiple instances of physical

hardware, and data management functions like data replication and data

distribution at object-level granularity.

There’s no doubt, the

future of archiving is object storage. Object storage is rapidly

replacing on premise tape, disk, and dedup disk technology as an

archival or backup methodology.

Massive amounts of

unstructured data may be retained efficiently by object storage as it is

ideal for purposes such as archiving medical imaging, photos, songs,

videos, etc.

This is

why Amazon S3, Google Cloud, MS Azure and all other public clouds use

object storage. Its flexibility, scalability, and cost are all

substantive advantages realized when retaining huge amounts of

unstructured data in the cloud. Object storage (outside of back up and

archive) can also be flexible with users being able to access data from

anywhere.

For

NonStop users in particular, there are definite considerations when

contemplating a move from legacy tape or disk archiving methodologies to

Cloud Object Storage. Generally these would fall into one of four

categories: Scalability, Performance, Cost and Security:

Scalability:

• Dispersed storage technology, employing Information Dispersal Algorithms, available with Cloud Object Storage, provides massive scalability with significantly reduced administrative overhead.

• Cloud Object Storage can grow easily from terabytes to petabytes to exabytes, and may be implemented on premise, or in public, private or hybrid clouds.

• These advanced scalability capabilities are ideally suited to rapidly growing data backup environments.

Performance:

• With an appropriate and compatible front-end device, data ingestion from any backup application, including NonStop, TSM, Commvault, NetBackup and Veeam, can be optimized. This allows rapid data ingestion and caching, reducing backup windows while streaming the data in policy-based pools to Cloud Object Storage at the back end.

• With the use of FlashSystem for the cache layer, backups to Cloud Object Storage at rates of up to 10.4 GB/sec (37.4 TB/hour) per node, and restores at rates of up to 9.6 GB/sec (34.6 TB/hour), are possible.
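For readers who want to relate these rates to their own backup windows, the following Python sketch converts GB/sec to TB/hour and estimates how long a backup of a given size would take. It assumes decimal units (1 TB = 1000 GB) and a sustained rate for the whole run; the function names and the 374.4 TB sample data set are ours, chosen only for illustration.

```python
def gb_per_sec_to_tb_per_hour(gb_per_sec: float) -> float:
    """Convert a throughput in GB/sec to TB/hour (decimal units, 1 TB = 1000 GB)."""
    return gb_per_sec * 3600 / 1000


def backup_window_hours(data_tb: float, gb_per_sec: float) -> float:
    """Hours needed to move `data_tb` terabytes at a sustained `gb_per_sec` rate."""
    return data_tb / gb_per_sec_to_tb_per_hour(gb_per_sec)


if __name__ == "__main__":
    for label, rate in (("backup", 10.4), ("restore", 9.6)):
        print(f"{label}: {rate} GB/sec = {gb_per_sec_to_tb_per_hour(rate):.2f} TB/hour")
    # Hypothetical data set size, chosen only to illustrate the calculation.
    print(f"374.4 TB at 10.4 GB/sec: {backup_window_hours(374.4, 10.4):.1f} hours")
```

The quoted TB/hour figures are simply the GB/sec figures multiplied by 3.6 and rounded to one decimal place.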

Cost:

• Cloud Object Storage typically delivers significantly lower total cost of ownership for storage systems at the multiple petabyte level, reducing or in many cases eliminating the need for data replication and the need for multiple copies.

• Cloud Object Storage is 55-60% of the cost per GB of archived data when compared to any dedup VTL.

• Cloud Object Storage may be purchased as a service (Storage as a Service, SaaS), thereby eliminating the need to procure and maintain backup hardware on premise or in remote DR sites. Storage capacity can be varied in the short term to deal with peak periods, and increased over the long term as a natural function of data growth.

Security:

• Cloud Object Storage is a highly secure object storage archival technology that has been in the marketplace for 13 years.

• The use of Information Dispersal Algorithms (IDA), otherwise known as Erasure Coding, in addition to AES 256-bit encryption, greatly enhances data security.

  – IDAs separate data into unrecognizable “slices” that are then distributed via network connection to storage nodes locally or across datacenters. Think of it this way: if you store a classic Ferrari in a single garage, a thief can break in, hotwire the car, and make off with it. If you disassemble the Ferrari and store the components in multiple garages, it’s very difficult, if not impossible, to steal and then reconstruct the vehicle.

  – IDA eliminates the need for data replication.
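The slicing idea can be shown in miniature with the Python sketch below. It is deliberately the simplest possible erasure code, a single XOR parity slice that can rebuild any one lost slice, and is not the algorithm used by any particular Cloud Object Storage product; production systems use stronger codes that tolerate several lost slices and combine them with encryption. All names here are hypothetical.

```python
from functools import reduce


def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Byte-wise XOR of two equal-length byte strings."""
    return bytes(x ^ y for x, y in zip(a, b))


def disperse(data: bytes, k: int = 3):
    """Split data into k equal-length slices plus one XOR parity slice.

    Returns (slices, original_length); the parity slice is last.
    """
    slice_len = -(-len(data) // k)  # ceiling division
    padded = data.ljust(slice_len * k, b"\0")
    slices = [padded[i * slice_len:(i + 1) * slice_len] for i in range(k)]
    parity = reduce(xor_bytes, slices)
    return slices + [parity], len(data)


def reconstruct(slices, original_length: int) -> bytes:
    """Rebuild the data when at most one slice (marked None) is missing."""
    missing = [i for i, s in enumerate(slices) if s is None]
    if len(missing) > 1:
        raise ValueError("single parity can recover only one lost slice")
    slices = list(slices)
    if missing:
        present = [s for s in slices if s is not None]
        # XOR of all surviving slices equals the missing one.
        slices[missing[0]] = reduce(xor_bytes, present)
    return b"".join(slices[:-1])[:original_length]


if __name__ == "__main__":
    slices, length = disperse(b"payment record 0001", k=3)
    slices[1] = None  # simulate the loss of one storage node
    print(reconstruct(slices, length))
```

Because any one slice can be rebuilt from the others, a lost node does not require a full second copy of the data, which is the sense in which dispersal can stand in for replication.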

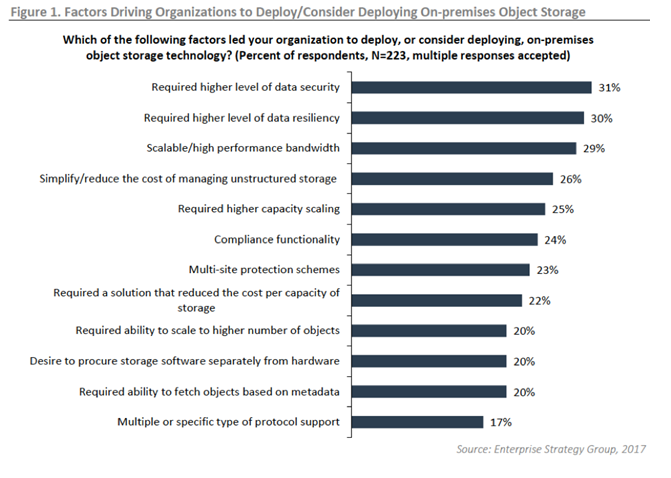

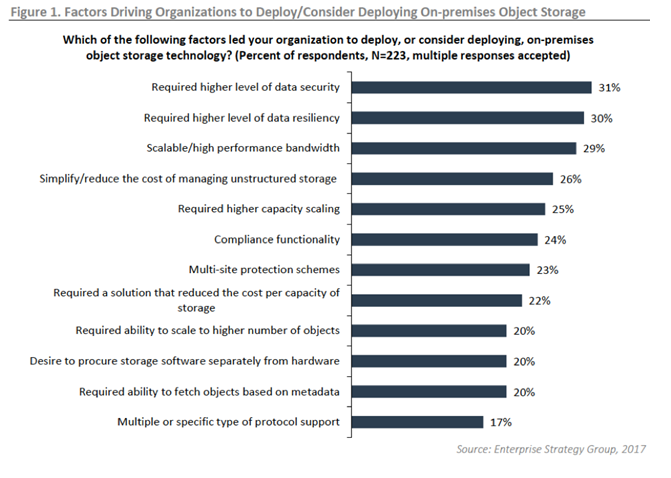

Interestingly, a November

2017 report from Scott Sinclair, Hitachi Enterprise Storage Group Senior

Analyst, showed that the top factor that leads organizations to deploy

or consider deploying on-premises object storage technology is a higher

level of data security.

Forged

by Power and Partnership:

For

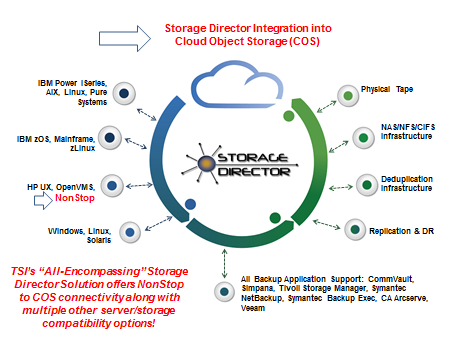

NonStop customers, a great answer is Tributary System’s Cloud Object

Storage Solution. Employing advanced COS technology coupled with

Tributary’s proven Storage Director® as the “front end”, NonStop

customers can transparently take advantage of IBM’s Cloud Object

Storage® (COS), formerly known as Cleversafe®, without any changes to

their NonStop applications. In addition,

Tributary’s

software-defined Storage Director high performance front end ingestion

and caching solution is now certified with Hitachi Content Platform (HCP).

Storage Director is a policy-based, tiered, and virtualized software product

especially designed for backup which can be seamlessly integrated with

any media, including tapes, disk drives, virtual environments and

NonStop or other proprietary environments, plus open systems. Storage

Director can group data into different pools and apply different

protection policies at different times across any storage medium

simultaneously. Tributary Systems has gained a massive strategic edge as

it has entered into a synergistic partnership with IBM to revolutionize

enterprise cloud data backup/restore, archive and DR. Combining the

capabilities of Storage Director while endorsing long-term archival to

Object Storage is where Tributary sees the data backup and retention

market evolving. Tributary is the only company in the marketplace that

can back up all NonStop mission-critical servers using a single solution.

In addition to Storage Director’s AES 256-bit encryption, data is also

erasure-coded in the storage tier. From a performance standpoint,

Tributary’s solution can ingest data at a rate of 12TB per hour and

restore at about 8.5TB per hour. Should flash storage be used in the

cache layer, the ingestion rate goes up to 37TB per hour and restores at

35TB per hour; these are metrics that are unmatched in the market. Thus,

Tributary’s IP, when combined with leading Cloud Object Storage

solutions imparts exclusive cutting-edge data storage and management

capabilities that can be extended well beyond public cloud models, into

hybrid and on-premise environments, and also offers double-layered

security for NonStop clients. Finally, the Storage Director Cloud Object

Storage solution can be implemented with a monthly fixed cost model,

unlike public cloud providers such as AWS, which charge customers for

accessing their data through additional fees for “puts and gets.”

Employing a cloud backup solution where costs vary widely from month to

month, based on access to their own data, is challenging for most

enterprise customers.

For more information visit

https://tributary.com/

Compliance Issues When Transitioning into a Hybrid IT Infrastructure

Companies moving to a hybrid infrastructure for data centers face

complex challenges, so collaboration and preparation are key elements in

ensuring a smooth transition.

Hybrid architectures can be supported by integrated

solutions that create a seamless environment between existing

infrastructure resources and newer cloud deployments. Such solutions

allow organizations to regulate workloads and user access to fully

support security, governance and compliance requirements.

The Time for Innovation is Now

With companies needing to carry out a root and branch

review, they currently have a choice. Should they improve their systems

to comply and tick the boxes, or should they change to innovate? One

would argue that being "good enough" isn't actually good enough, as

customers place so much emphasis on the security of their data. Firms

should capitalize on the excellent opportunity to take stock of what

they have on their networks, as well as the policies that govern them.

Enterprises are warming to the idea of the hybrid cloud as a solution

that is secure, cost effective and suitable for mobile workforces.

General perception used to be that businesses ran on on-premise clouds

and used public clouds for application development. Now we're seeing

increased buy-in, with concerns around integration and performance put

to rest.

Things get more interesting from a GDPR perspective

with object storage thrown in the mix. Using this type of architecture

in a hybrid infrastructure allows for sensitive data sets to remain

on-site. Meanwhile, the less important data can be archived to the

public cloud. Object storage also adds control of data between clouds,

including public clouds such as AWS. There's also the benefit of

auditability and reliable control and management functionality.

Regardless of where it sits, data can be managed using a set of tools

and policies designed for hybrid platforms, tools such as CSP's

Protect-X®.

Protect-X® - The Compliance & Security Hardening

Solution Built for Hybrid IT

Protect-X® user interface

Protect-X® is a browser-based, automated security

compliance solution using the latest JavaScript technologies. It

supports HPE NonStop/X, Virtual NonStop and UNIX platforms. Wholly

developed by CSP, Protect-X® is built using an agent-less design so there

is nothing to install on your NonStop servers. All security is managed

off-platform, via very fast and very strong encrypted connections.

Because Protect-X® was built with Virtual NonStop

and open source applications in mind, it is the perfect tool to ensure

that your hybrid infrastructure is compliant and secure. Protect-X®

allows you to easily ensure compliance, assess risk and manage security

of your hybrid platforms.

Protect-X®:

· Allows a single security compliance policy to be automatically verified across hybrid systems, such as NonStop servers and UNIX servers

· Allows delegation of tasks and makes security compliance changes only after approval by the responsible administrator

Protect-X® is a powerful tool that has the ability

to automatically validate compliance policies across different

environments and IT architectures. It can be completely customized to

suit your specific needs. It places all the power in your hands, but

simplifies and automates many routine tasks.

Test Drive Protect-X® Here

For more information on CSP solutions, visit www.cspsecurity.com

For complimentary access to CSP-Wiki®, an extensive repository of NonStop security knowledge and best practices, please visit wiki.cspsecurity.com

We Built the Wiki for NonStop Security®

Regards,

The CSP Team

+1 (905) 568-8900

TIC News You Can Use – NEW TOP Windows-Based GUI Webinar

TIC Software, the leading provider of software solutions and consulting services for HPE NonStop modernization, is pleased to announce the addition of TOP Windows-based GUI from partner comforte to the firm’s offerings. TOP reduces complex tasks to simple point-and-click operations to save time, increase productivity, and reduce errors.

TOP will be demonstrated in a Webinar highlighting the intuitive interface and features including:

- Point-and-click Navigation

- Extensive OSS Support

- Individual Security Profiles

- Command Auditing and Logging

- Point-and-click Backup Facility

- Secure SSL Connectivity

- And More…

Sign Up Today & Experience the Next Generation GUI for NonStop!

Join our Webinar on May 23, 2018, 10:00 AM - 11:00 AM EST | Presenter: Phil Ly

Webinar: https://attendee.gotowebinar.com/register/5914340506292613890

For more information, send us an email at sales-support@ticsoftware.com or visit http://www.ticsoftware.com

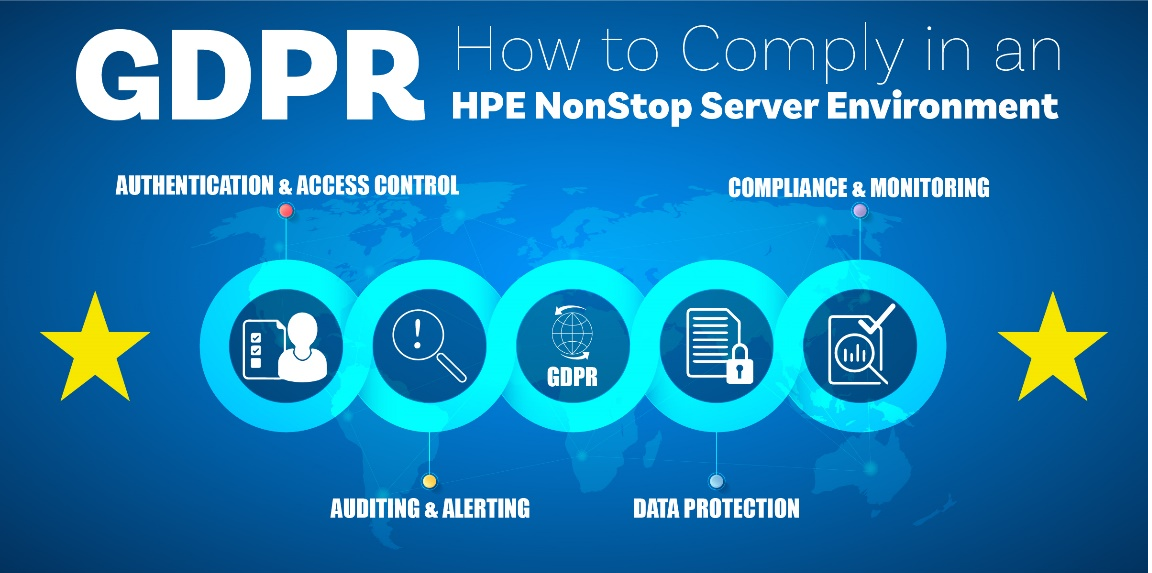

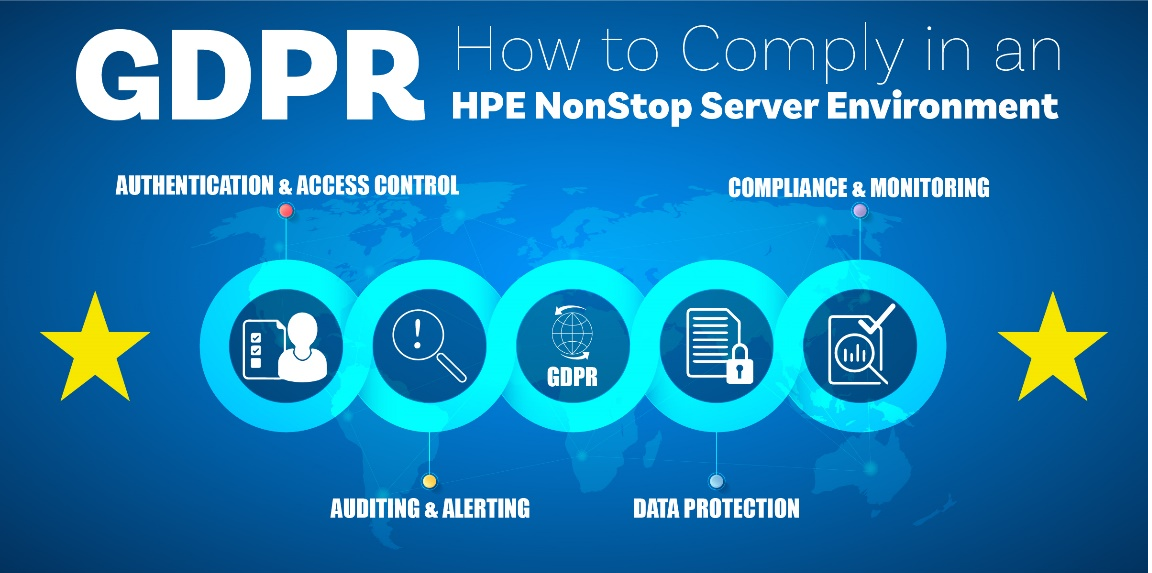

XYPRO - GDPR – How to Comply in an HPE NonStop Server Environment

The General Data Protection Regulation (GDPR) is a new European Union

privacy law that takes effect May 25. This is the biggest change in

European data protection in decades. GDPR is not an EU-only regulation;

it affects any business or individual handling the data of EU citizens,

regardless of where that business or individual is based.

Over 50% of organizations with EU citizen data haven’t implemented plans

to address GDPR or don’t think the privacy regulation applies to them.

Avoid a costly surprise. The attached article will walk

you through the fundamentals of GDPR preparation in an HPE NonStop

Server environment, right down to the tools and strategy necessary to

comply with these regulations. With fewer than 60 days left, NonStop GDPR

compliance should not be a daunting task.

Let XYPRO help.

Read More

XYPRO Provides Identity Governance and Administration for HPE NonStop Servers

XYPRO and SailPoint Partner to Provide Identity Management for HPE NonStop

XYPRO’s XIC solution simplifies requirements and compliance activities.

When an identity is disabled through SailPoint IdentityIQ, the

corresponding account is immediately disabled on all NonStop servers on

which the identity was provisioned. When that identity is removed using

IdentityIQ, the account is immediately removed from all NonStop servers,

ensuring the removal of stale accounts, improving your relationship with

your auditors and strengthening your security procedures at the same

time.

For Additional Integrations Contact Your XYPRO Account Executive

Read More

XYPRO looks forward to seeing you at the upcoming shows!

BITUG Big SIG 2018: May 9, 2018, Trinity House, Tower Hill, London

GTUG: European NonStop Hotspot 2018: May 14 to May 16, 2018, The Westin Hotel Leipzig, Leipzig, Germany

NENUG 2018: May 21, 2018, Andover, MA

PCI Asia-Pacific 2018: May 23 to May 24, 2018, Tokyo, Japan

NYTUG 2018: May 23, 2018, Berkeley Heights, NJ

N2TUG 2018: June 7, 2018, the Hilton Dallas/Plano Granite Park in Plano

VNUG 2018: September 11 to September 12, 2018

ATUG 2018: September 19, 2018

CTUG 2018: September 26 to September 27, 2018, Hewlett Packard Enterprise Canada, 5150 Spectrum Way, Mississauga, Ontario

HPE NONSTOP SYSTEMS & LUSIS PAYMENTS

Working together. Accelerating results. A new style of partnering

Hewlett Packard Enterprise and Lusis Payments are collaborating in a fresh new way to bring increased value to customers like you.

We know that acquiring technology is only the first step in achieving a business goal. The technology pieces need to work together. They need to be tested. They need to provide rich functionality, quickly and effectively, so you can concentrate on your business needs.

To help satisfy these needs, Lusis Payments is a member of the HPE Partner Ready for Technology Partner program, an industry-leading approach to supplying sophisticated integrated technologies in a simple, confident, and efficient manner.

Lusis provides state-of-the-art products and services to the payments industry. Using a microservice architecture, Lusis brings a modern and truly flexible proposition to the payments ecosystem.

Lusis Payments has access to the right tools, processes, and resources to help our joint customers accelerate innovation and transformation that brings value, meets business needs, and increases revenue and market share.

Read the full article.

For more information about TANGO, contact Brian Miller at brian.miller@lusispayments.com or visit http://www.lusispayments.com

Find out more about us at www.tandemworld.net

Platinum Sponsor

Gold Sponsor
